January 5, 2026

The new rules of application security in the age of AI-generated code

The following is by Mesh Chief Information Security Officer Daniel Hooper.

Software is no longer written solely by humans. Large language models, code assistants, and autonomous agents now generate boilerplate, business logic, integrations, and sometimes entire features with a single prompt.

This shift delivers enormous speed, but it also introduces a new class of security risk. AI systems optimize for functional output, not trust boundaries, threat models, or compliance. As a result, the assumptions behind traditional Application Security Testing (AST) no longer hold.

This article explains how AI-generated code reshapes the threat landscape, why existing AST workflows fall short, and what a modern, AI-aware AST pipeline must look like.

What is Application Security Testing (AST)?

Application Security Testing (AST) refers to the tools and practices used to identify vulnerabilities in software before (and sometimes after) it ships. Historically, AST focused on ensuring applications handle data safely, enforce access controls, and resist common attack patterns.

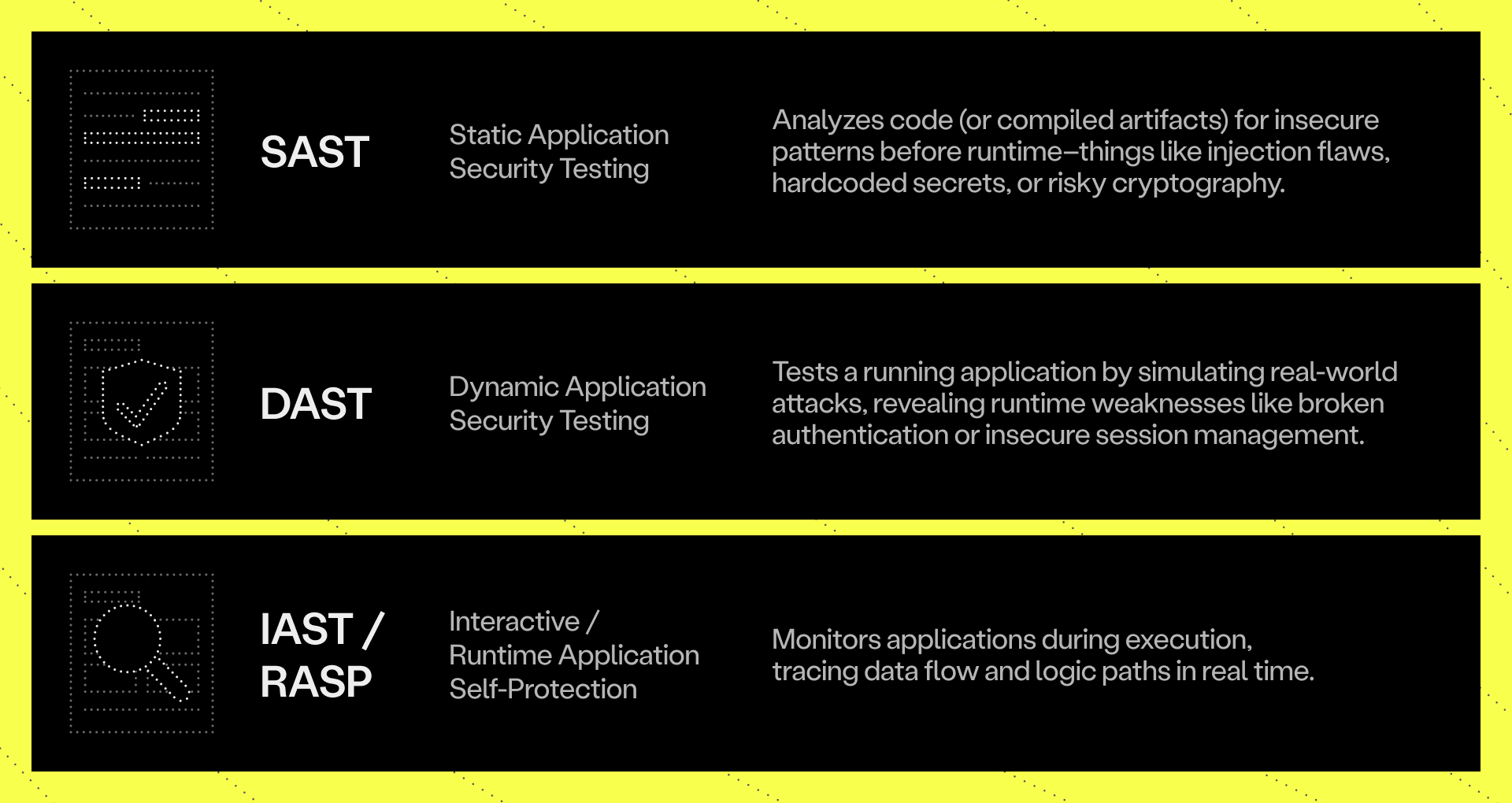

AST typically falls into three categories:

These approaches evolved in a world where humans wrote code incrementally, followed architectural conventions, and understood system boundaries. AI breaks those assumptions.

Why AI-generated code changes the threat model

AI-generated code isn’t just faster to produce; it’s structurally different. Its risks are architectural, not just syntactic.

1. No awareness of security architecture

AI does not understand trust zones, separation of concerns, or data-handling policies. It frequently produces code that works while quietly violating design intent.

Common examples include:

- Mixing authentication and business logic

- Bypassing service or network boundaries

- Returning sensitive fields from public endpoints

- Disabling certificate validation “for convenience”

- Applying overly broad session or authorization scopes
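The "sensitive fields from public endpoints" case is easy to make concrete. The minimal Python sketch below uses a hypothetical `User` model and a hypothetical `PUBLIC_USER_FIELDS` allowlist. Serializing the whole object, which is what `dataclasses.asdict` does, leaks every field. An explicit allowlist exposes only what the endpoint is meant to return.

```python
from dataclasses import asdict, dataclass


@dataclass
class User:
    # Hypothetical persistence model that mixes public and sensitive fields.
    id: int
    email: str
    password_hash: str
    is_admin: bool


# Illustrative allowlist: the only fields a public endpoint may return.
PUBLIC_USER_FIELDS = ("id", "email")


def serialize_user_leaky(user: User) -> dict:
    # Pattern often seen in generated code: dump the entire object.
    return asdict(user)


def serialize_user_public(user: User) -> dict:
    # Allowlist approach: only explicitly approved fields leave the service.
    return {name: getattr(user, name) for name in PUBLIC_USER_FIELDS}
```

The allowlist fails closed. A sensitive column added to the model later stays private unless someone deliberately adds it to the list.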

2. Insecure defaults at scale

LLMs regularly generate:

- Outdated cryptographic primitives

- Hardcoded credentials or tokens

- Relaxed CORS configurations

- Unauthenticated internal endpoints

Each instance may seem minor, but at AI speed, these defaults multiply rapidly.
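The sketch below replaces two of these defaults using only the Python standard library. It covers an outdated primitive and a hardcoded secret. Passwords are hashed with salted PBKDF2-HMAC-SHA256 instead of a fast unsalted hash such as MD5, and a credential is read from the environment instead of being embedded in source. The iteration count and the `APP_API_TOKEN` variable name are assumptions. A dedicated password-hashing scheme such as Argon2 or bcrypt may be a better production choice.

```python
import hashlib
import hmac
import os
import secrets

# Assumed work factor; tune to your hardware and current guidance.
PBKDF2_ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) using salted PBKDF2-HMAC-SHA256."""
    salt = secrets.token_bytes(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, PBKDF2_ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the key with the stored salt and compare in constant time."""
    key = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, PBKDF2_ITERATIONS)
    return hmac.compare_digest(key, expected)


def load_api_token() -> str:
    """Read a credential from the environment instead of hardcoding it."""
    token = os.environ.get("APP_API_TOKEN")  # hypothetical variable name
    if not token:
        raise RuntimeError("APP_API_TOKEN is not set")
    return token
```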

3. Expanded and accidental attack surface

AI often suggests exposing internal APIs, skipping authorization layers, or introducing permissive third-party libraries. The result is a broader, less intentional attack surface.
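Making authorization deny-by-default keeps an accidentally exposed endpoint from also being an unauthenticated one. The framework-free sketch below is hypothetical: `Router`, `Request`, and `public` are illustrative names, not a real library. Every route requires an authenticated principal unless it is explicitly marked public. A generated handler that "forgets" its auth check therefore still fails closed.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Request:
    path: str
    user: Optional[str] = None  # authenticated principal, if any


Handler = Callable[[Request], str]


def public(handler: Handler) -> Handler:
    """Explicitly opt a handler out of authentication."""
    handler.is_public = True  # type: ignore[attr-defined]
    return handler


class Router:
    def __init__(self) -> None:
        self._routes: dict[str, Handler] = {}

    def route(self, path: str) -> Callable[[Handler], Handler]:
        def register(handler: Handler) -> Handler:
            self._routes[path] = handler
            return handler
        return register

    def dispatch(self, request: Request) -> tuple[int, str]:
        handler = self._routes.get(request.path)
        if handler is None:
            return 404, "not found"
        # Deny by default: only handlers marked @public skip the auth check.
        if not getattr(handler, "is_public", False) and request.user is None:
            return 401, "authentication required"
        return 200, handler(request)


router = Router()


@router.route("/health")
@public
def health(request: Request) -> str:
    return "ok"


@router.route("/internal/users")
def list_users(request: Request) -> str:
    # No auth code here, yet anonymous callers are still rejected.
    return "user list"
```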

4. Threat-model erosion

Traditional security relies on developers understanding system boundaries. AI does not. It can introduce logic paths that invalidate an entire threat model without triggering obvious alarms.

5. Velocity overwhelms controls

One developer with an AI assistant can generate days or weeks of code in hours. Review processes and AST tooling designed for slower workflows struggle to keep up.

Why traditional AST falls short

Most AST tools were built to analyze predictable, human-authored codebases. AI-generated code exposes several gaps:

- Unfamiliar patterns: LLMs blend frameworks, invent abstractions, or hallucinate libraries that don’t match existing rule sets.

- Volume overload: Security teams cannot manually review the volume of AI-generated code flowing into repositories.

- Missing context: Traditional AST flags insecure patterns but cannot detect violations of architectural intent or trust boundaries.

- Dependency hallucinations: AI frequently introduces outdated, insecure, or nonexistent packages that evade standard checks.

The result: vulnerabilities aren’t just missed; they’re introduced faster than security teams can respond.

Evolving AST for the AI era

To secure machine-authored code, AST must move beyond pattern matching and become context-aware, continuous, and architecture-enforcing.

1. Treat AI-generated code as untrusted

AI output should be treated like third-party code: tagged, sandboxed, and subjected to stricter review. Commit metadata should identify AI-assisted changes and route them through enhanced security checks.
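Here is a minimal sketch of metadata-based routing. It assumes a team convention of adding a Git commit trailer such as `AI-Assisted: true`. The trailer name and the check names are hypothetical and are not a Git or CI standard. The code parses `Key: value` trailers from the last paragraph of a commit message and returns the set of checks to run.

```python
# Hypothetical check names; map these to real CI jobs.
BASELINE_CHECKS = frozenset({"unit-tests", "sast"})
ENHANCED_CHECKS = frozenset({"secret-scan", "sca", "architecture-rules", "security-review"})

AI_TRAILER = "ai-assisted"  # hypothetical team convention, lowercased


def parse_trailers(message: str) -> dict[str, str]:
    """Parse 'Key: value' lines from the final paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    # Trailers live in the last paragraph; a subject line alone has none.
    if len(paragraphs) < 2:
        return {}
    trailers: dict[str, str] = {}
    for line in paragraphs[-1].splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        # Trailer keys are single tokens such as "Signed-off-by".
        if sep and key and " " not in key:
            trailers[key.lower()] = value.strip()
    return trailers


def required_checks(message: str) -> frozenset[str]:
    """Route AI-assisted commits through the enhanced check set."""
    trailers = parse_trailers(message)
    if trailers.get(AI_TRAILER, "").lower() in {"true", "yes"}:
        return BASELINE_CHECKS | ENHANCED_CHECKS
    return BASELINE_CHECKS
```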

2. Shift security even further left

Security constraints must move upstream into the prompts themselves.

For example:

“Generate a secure file-upload endpoint in Flask. Validate MIME type, sanitize filenames, enforce size limits, and isolate storage.”

Secure-by-prompt becomes a first-class control.
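Secure-by-prompt becomes repeatable when security constraints live in a shared, versioned template rather than in each developer's memory. The sketch below is illustrative. The constraint wording and the `build_prompt` helper are assumptions. A prompt constraint reduces insecure output but does not replace scanning and review.

```python
# Hypothetical organization-wide constraints, versioned alongside the code.
SECURITY_CONSTRAINTS: dict[str, list[str]] = {
    "common": [
        "Do not hardcode credentials, tokens, or keys; read them from configuration.",
        "Do not disable TLS certificate verification.",
        "Validate and bound all external input.",
    ],
    "file-upload": [
        "Validate the MIME type against an allowlist.",
        "Sanitize filenames and never use them as filesystem paths directly.",
        "Enforce a maximum upload size.",
        "Store uploads outside the web root, in isolated storage.",
    ],
}


def build_prompt(task: str, category: str) -> str:
    """Append the applicable security constraints to a generation request."""
    rules = SECURITY_CONSTRAINTS["common"] + SECURITY_CONSTRAINTS.get(category, [])
    lines = [task, "", "Security requirements:"]
    lines += [f"- {rule}" for rule in rules]
    return "\n".join(lines)
```

For example, `build_prompt("Generate a file-upload endpoint in Flask.", "file-upload")` yields the task followed by the common and upload-specific requirements. Unknown categories fall back to the common rules only.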

3. Continuous static and dependency scanning

Every AI-generated artifact should undergo SAST, secret scanning, and software composition analysis (SCA) before merging. This catches insecure defaults and hallucinated dependencies early.
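Production pipelines usually rely on dedicated scanners for this. The toy sketch below only illustrates the two ideas. The regular expressions are a deliberately small sample, for example the `AKIA` prefix used by long-term AWS access key IDs. `APPROVED_PACKAGES` is a hypothetical internal allowlist standing in for a real SCA and registry check. Any package name the organization has never vetted, including one an LLM invented, is flagged for review rather than installed.

```python
import re

# Small illustrative subset of secret patterns; real scanners ship hundreds.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic-assignment": re.compile(
        r"(?i)\b(?:password|passwd|secret|api_key|token)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}

# Hypothetical allowlist of vetted packages (PEP 503-normalized names).
APPROVED_PACKAGES = {"flask", "requests", "sqlalchemy", "pydantic"}


def scan_for_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a secret pattern."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


def unapproved_dependencies(requirements: str) -> list[str]:
    """Return requirement names that are not on the approved list."""
    flagged = []
    for line in requirements.splitlines():
        line = line.split("#", 1)[0].strip()
        # Skip blanks and pip options such as "-r other.txt".
        if not line or line.startswith("-"):
            continue
        # Keep only the project name: drop extras, specifiers, markers, URLs.
        name = re.split(r"[\s\[<>=!~;@]", line, maxsplit=1)[0]
        name = re.sub(r"[-_.]+", "-", name).lower()  # PEP 503 normalization
        if name not in APPROVED_PACKAGES:
            flagged.append(name)
    return flagged
```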

4. Enforce architecture and threat models

Security is not just about code correctness; it's about design integrity. Teams need automated checks that validate data flows, API exposure, and service interactions against the intended architecture. This is how guardrails are preserved as AI accelerates change.
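One concrete form of such a check enforces layer boundaries on Python source with the standard-library `ast` module. In the sketch below, the layer names and the `ALLOWED_IMPORTS` map are hypothetical stand-ins for a team's intended architecture. Code in the `api` layer may call `services` but must not import `db` directly, so a generated handler that reaches straight into the database is flagged.

```python
import ast

# Hypothetical intended architecture: internal layers each layer may import.
ALLOWED_IMPORTS = {
    "api": {"services"},
    "services": {"db"},
    "db": set(),
}
INTERNAL_LAYERS = set(ALLOWED_IMPORTS)


def imported_modules(source: str) -> list[str]:
    """Collect top-level package names from absolute imports in a module."""
    names = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.extend(alias.name.split(".")[0] for alias in node.names)
        # Relative imports (level > 0) stay inside the current package; skip them.
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.append(node.module.split(".")[0])
    return names


def boundary_violations(layer: str, source: str) -> list[str]:
    """Return internal layers imported by `layer` that the architecture forbids."""
    allowed = ALLOWED_IMPORTS[layer]
    return [
        name
        for name in imported_modules(source)
        if name in INTERNAL_LAYERS and name != layer and name not in allowed
    ]
```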

5. Runtime validation and monitoring

AI-generated code can behave unpredictably. Dynamic testing and runtime protection ensure that:

- anomalous API calls are detected

- unauthorized data access is blocked

- insecure defaults surface under real conditions
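Here is a minimal sketch of the first two behaviors. It assumes each service declares the outbound endpoints it expects to call and the data scopes it may read. The `ServicePolicy` structure and its fields are hypothetical. Calls outside the declared set are recorded as anomalies, and reads outside the granted scopes are denied. Real deployments would more likely enforce this in a gateway, service mesh, or runtime protection agent than in application code.

```python
from dataclasses import dataclass, field


@dataclass
class ServicePolicy:
    """Hypothetical per-service declaration of expected runtime behavior."""

    allowed_endpoints: set[str]
    allowed_scopes: set[str]
    anomalies: list[str] = field(default_factory=list)

    def record_call(self, method: str, host: str, path: str) -> None:
        """Flag outbound API calls that the service never declared."""
        endpoint = f"{method.upper()} {host}{path}"
        if endpoint not in self.allowed_endpoints:
            self.anomalies.append(endpoint)

    def authorize_read(self, scope: str) -> bool:
        """Permit data access only within declared scopes; log denials."""
        if scope in self.allowed_scopes:
            return True
        self.anomalies.append(f"denied read {scope}")
        return False
```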

6. Close the feedback loop

Recurring issues should feed back into prompt templates, code generators, architectural standards, and security policies. The system must learn as fast as the AI does.
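One way findings could flow back into generation is to count scanner findings by rule and promote any rule that recurs past a threshold into a prompt constraint. The sketch below does that. The rule IDs, the default threshold, and the `CONSTRAINT_FOR_RULE` mapping are hypothetical. In practice the input would come from the SAST, secret-scanning, and SCA results the pipeline already produces.

```python
from collections import Counter

# Hypothetical mapping from scanner rule IDs to prompt-level constraints.
CONSTRAINT_FOR_RULE = {
    "tls-verify-disabled": "Never disable TLS certificate verification.",
    "cors-wildcard": "Restrict CORS to an explicit list of trusted origins.",
    "hardcoded-secret": "Read credentials from configuration, never from literals.",
}


def promote_recurring_findings(findings: list[str], threshold: int = 3) -> list[str]:
    """Return prompt constraints for mapped rules seen at least `threshold` times."""
    counts = Counter(findings)
    return [
        CONSTRAINT_FOR_RULE[rule]
        for rule, count in counts.most_common()
        if count >= threshold and rule in CONSTRAINT_FOR_RULE
    ]
```

Rules without a mapping are ignored here. A real system might instead route them to a human to draft a new constraint.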

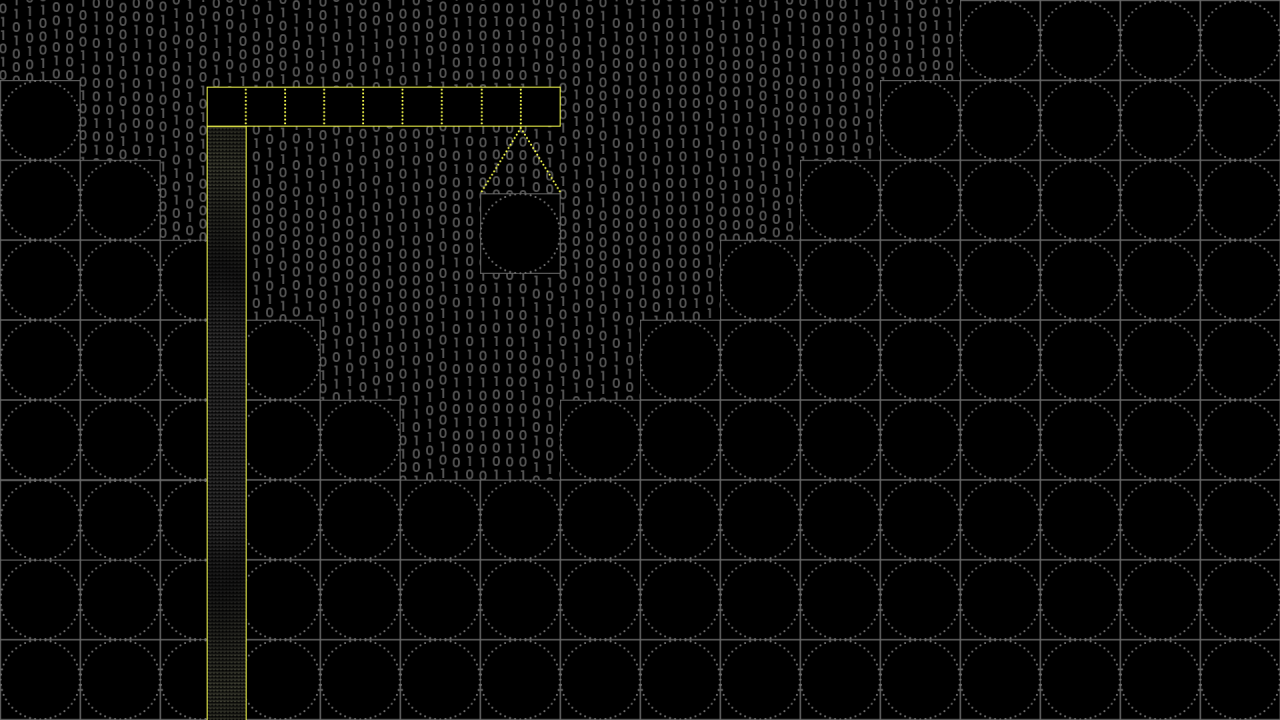

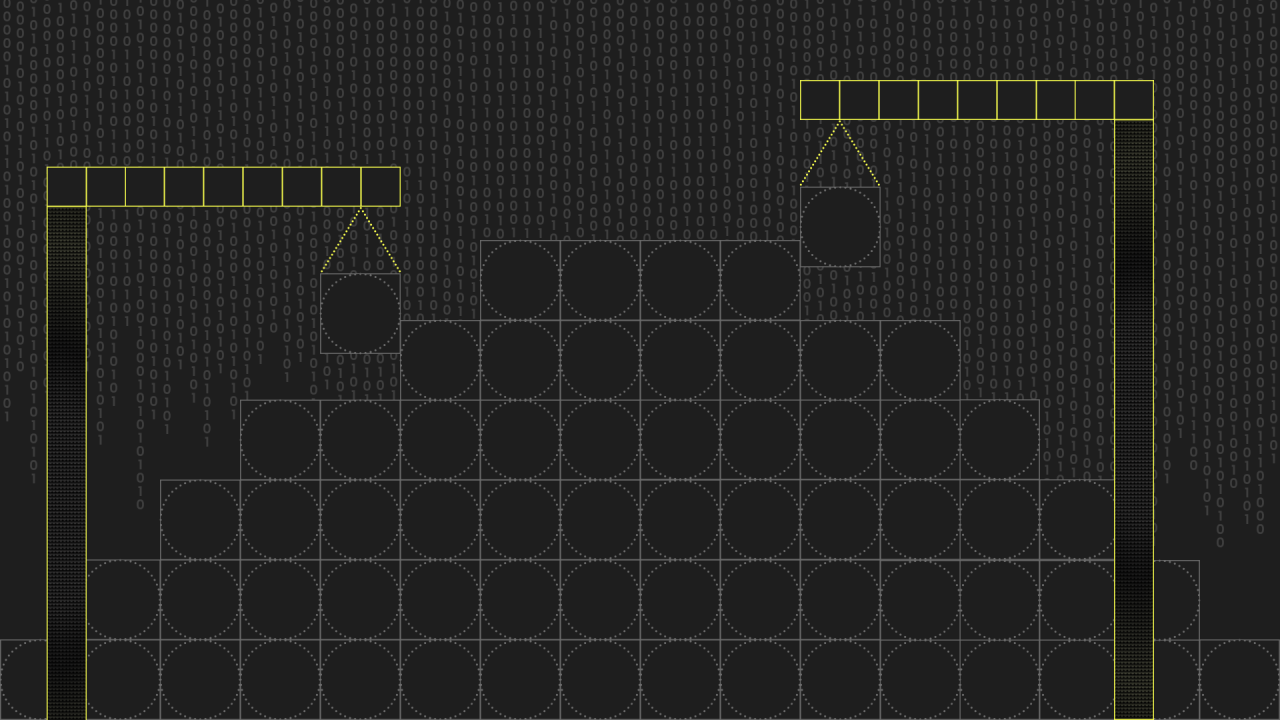

A modern AST pipeline for AI code

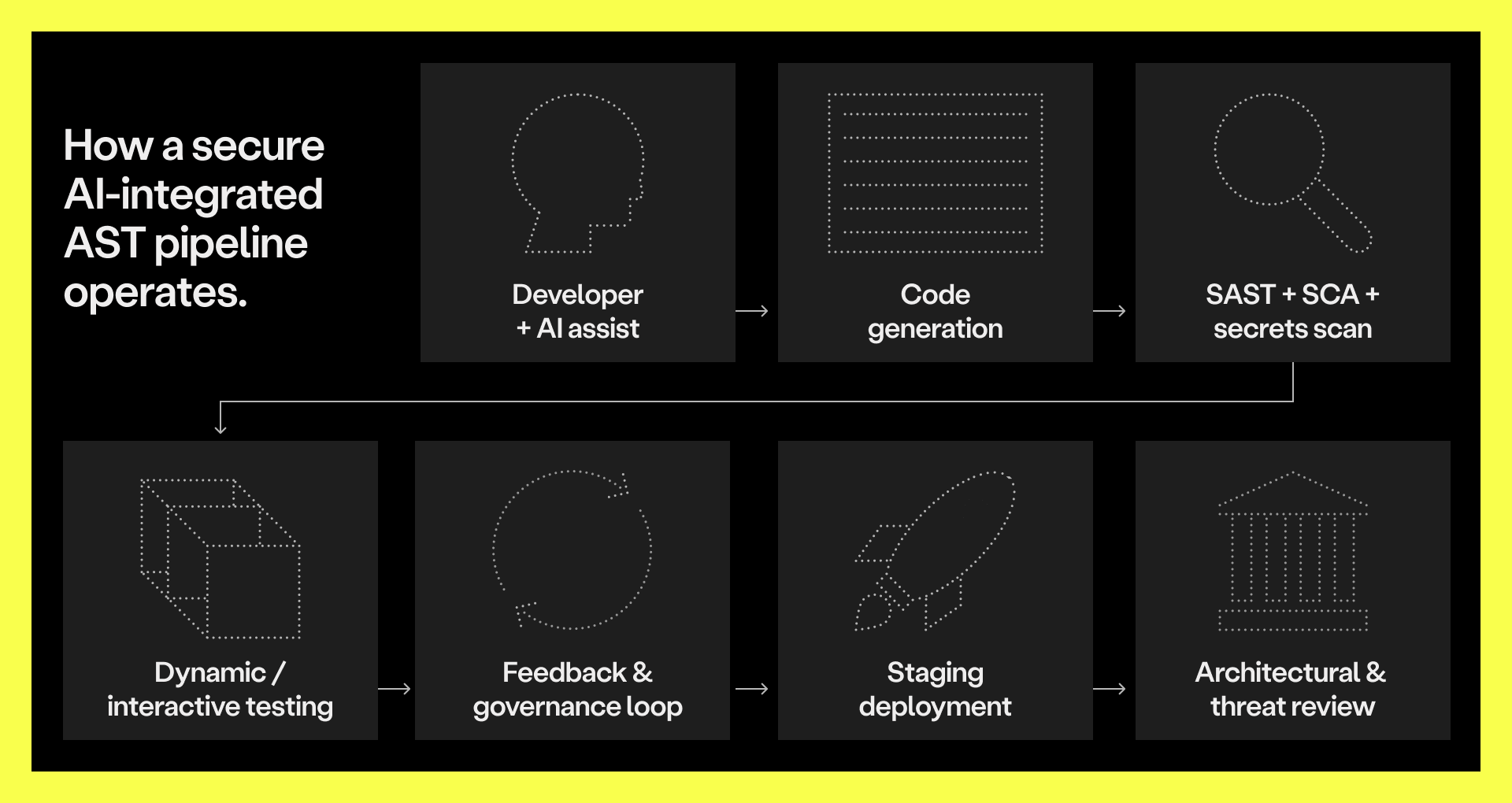

The diagram above gives a conceptual view of how a secure, AI-integrated AST pipeline operates.

In this model, every AI-generated code segment passes through a multi-layered validation pipeline (from static analysis and architectural checks to dynamic runtime testing) before reaching production. The feedback loop ensures continuous improvement in both AI prompting and security enforcement.
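The layered model can be expressed as an ordered list of gates that must all pass before a change is promoted. The sketch below is conceptual. Each stage is a hypothetical placeholder standing in for a real tool: static analysis, architecture rules, or dynamic testing. The gate collects every stage's findings, so developers see all problems at once rather than one per run.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Change:
    # Minimal stand-in for a pull request or generated artifact.
    files: dict[str, str]  # path -> contents


Stage = Callable[[Change], list[str]]


def static_analysis(change: Change) -> list[str]:
    # Placeholder SAST rule: flag one well-known insecure pattern.
    return [f"{path}: TLS verification disabled"
            for path, text in change.files.items() if "verify=False" in text]


def architecture_rules(change: Change) -> list[str]:
    # Placeholder rule: the api layer must not touch the db package directly.
    return [f"{path}: api layer imports db"
            for path, text in change.files.items()
            if path.startswith("api/") and ("from db" in text or "import db" in text)]


def dynamic_tests(change: Change) -> list[str]:
    # Placeholder for DAST or runtime checks against a staging deployment.
    return []


def run_pipeline(change: Change, stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run every stage, collect all findings, and pass only if there are none."""
    findings = [finding for stage in stages for finding in stage(change)]
    return not findings, findings


STAGES: list[Stage] = [static_analysis, architecture_rules, dynamic_tests]
```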

Closing thoughts

AI is transforming how software is built. It increases speed, but it also bypasses assumptions that traditional security models depend on. To keep up, AST must evolve. It must be continuous, contextual, and architecture-aware. The goal is no longer just finding vulnerabilities before release; it's governing machine-generated code so that speed does not come at the cost of safety.

If the future of development is human + AI, the future of security is AST that understands both.

